
Arkwood was burning something on the fire. Cough cough! The smoke billowed out of the back garden pit and into my lungs.

‘Put the fire out!’ I cried. ‘What on earth are you burning?’

Arkwood studied the flames intently. ‘Grandfather’s clothes.’

Turns out Grandpa’s garments were all blue – the old man’s favourite colour – and it just made Arkwood sad to see them around the house. ‘It’s time to burn,’ he repeated over and over, like a shamanic chant.

Anyhow, it got me thinking. Some colours make us happy, some make us feel sad. So I added a new feature to SaltwashAR – my Python Augmented Reality application – so that Rocky Robot could have a happy colour.

I set her happy colour to green and started the application. Here’s how she got on:

When Rocky Robot’s favourite green teddy is close by, she is comforted.

But take it away and she cries and says “I just feel sad”. You see, there is no green colour in the webcam image, so she becomes depressed.

I placed a spinning globe in front of the webcam, but still she weeps. There is not much green on a globe of dusty land and blue sea.

So instead I put a big green plant beside Rocky Robot. Wow, she bobs her head up and down and exclaims “I’m so happy!”. All that green foliage in the webcam image has filled her metal heart with joy!

I remove the plant – she cries again.

I put her teddy back at her side and she is comforted. There is not enough green in the small teddy to make her jump with joy, but there is enough green to stop her from wailing.

I updated SaltwashAR on GitHub with the new feature.

‘Arkwood,’ I said, placing a sympathetic hand upon his shoulder, ‘I understand why you are burning the clothes. Seeing your Grandfather’s trousers is making you feel sad for his passing.’

Arkwood looked at me with a furrowed brow. ‘Grandpa’s not dead!’ he screamed, ‘It’s just that he keeps leaving his clothes lying around the house, after the orgies, and they smell of piss.’

Oh well, romantic notions they fade.

Ciao!

P.S.

If you are wondering how Rocky Robot detects colour, let’s have a look at the Happy Colour feature:

    from features.base import *
    import ConfigParser
    import numpy as np
    import cv2
    from constants import *

    class HappyColour(Feature, Speaking, Emotion):

        def __init__(self, text_to_speech):
            Feature.__init__(self)
            Speaking.__init__(self, text_to_speech)
            Emotion.__init__(self)
            self._load_config()

        def _load_config(self):
            config = ConfigParser.ConfigParser()
            config.read("scripts/features/happycolour/happycolour.ini")

            # get colour range from config
            self.lower_colour = np.array(map(int, config.get("Colour", "Lower").split(',')))
            self.upper_colour = np.array(map(int, config.get("Colour", "Upper").split(',')))

            # get threshold from config
            self.lower_threshold = config.getint("Threshold", "Lower")
            self.upper_threshold = config.getint("Threshold", "Upper")

        def _thread(self, args):
            image = args

            # convert image from BGR to HSV
            hsv = cv2.cvtColor(image, cv2.COLOR_BGR2HSV)

            # only get colours in range
            mask = cv2.inRange(hsv, self.lower_colour, self.upper_colour)

            # obtain colour count
            colour_count = cv2.countNonZero(mask)

            # check whether to stop thread
            if self.is_stop: return

            # respond to colour count
            if colour_count < self.lower_threshold:
                self._text_to_speech("I just feel sad")
                self._display_emotion(SAD)
            elif colour_count > self.upper_threshold:
                self._text_to_speech("I'm so happy!")
                self._display_emotion(HAPPY)

Our Happy Colour feature inherits from multiple base classes, so as to obtain common functionality for threading, text to speech and emotion. It also makes use of the Python ConfigParser to load a colour range for its happy colour (in this case, green), along with Happy and Sad threshold values.

The _thread method is where all the shit happens, as a vicar would say. We use OpenCV to convert the webcam image into HSV format, then use our colour range to obtain an image mask. We can count all the non-black pixels in the mask to determine how much green is in front of the webcam.
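If you fancy seeing what the convert, mask and count steps boil down to without installing OpenCV, here is a rough, dependency-free sketch. It is not how SaltwashAR does it (the feature calls cv2.cvtColor, cv2.inRange and cv2.countNonZero). The helper names bgr_to_opencv_hsv and count_in_range and the four-pixel "image" are made up for illustration, and I am assuming OpenCV's 8-bit HSV convention, where hue is stored as degrees divided by two (0 to 179) and saturation and value run from 0 to 255. OpenCV's own rounding may differ by a step here and there.

```python
import colorsys

def bgr_to_opencv_hsv(b, g, r):
    """Convert one 8-bit BGR pixel to OpenCV-style 8-bit HSV (approximate)."""
    h, s, v = colorsys.rgb_to_hsv(r / 255.0, g / 255.0, b / 255.0)
    # Hue is halved so that 0-360 degrees fits into a byte as 0-179
    return (int(round(h * 180)) % 180, int(round(s * 255)), int(round(v * 255)))

def count_in_range(pixels_bgr, lower, upper):
    """Count pixels whose H, S and V all sit inside [lower, upper], inclusive."""
    count = 0
    for b, g, r in pixels_bgr:
        hsv = bgr_to_opencv_hsv(b, g, r)
        if all(lo <= c <= hi for c, lo, hi in zip(hsv, lower, upper)):
            count += 1
    return count

# Hypothetical four-pixel "image" in BGR order: green, blue, red, dark green
pixels = [(0, 255, 0), (255, 0, 0), (0, 0, 255), (0, 40, 0)]

# Same colour range as the Happy Colour config file
green_count = count_in_range(pixels, (58, 50, 50), (78, 255, 255))
print(green_count)
```

Pure green lands on a hue of 60, which is why the config range of 58 to 78 catches it. The dark green pixel has the right hue but its value is below 50, so it is left out of the count.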

If there is not much green in front of the webcam, as is the case with the globe, then Rocky Robot says “I just feel sad” and displays a sad emotion (she cries tears and drops her head).

If there is lots of green in front of the webcam, as is the case with the plant, then Rocky Robot says “I’m so happy!” and displays a happy emotion (she bobs her head and twinkles her eyes).

If there is a bit of green in front of the webcam, as is the case with the teddy, then Rocky Robot does nothing. She is simply content to blink her eyes.
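The three outcomes above can be sketched as a tiny standalone function. This is only an illustration of the threshold logic: react_to_colour_count is a made-up name, the defaults are the threshold values from the config file, and the pixel counts in the usage lines are invented numbers rather than measurements from my webcam.

```python
def react_to_colour_count(colour_count, lower_threshold=500, upper_threshold=15000):
    """Return the robot's mood for a given count of happy-colour pixels."""
    if colour_count < lower_threshold:
        return "sad"        # hardly any green: "I just feel sad"
    if colour_count > upper_threshold:
        return "happy"      # lots of green: "I'm so happy!"
    return "content"        # somewhere in between: no speech, just blinking

# Invented pixel counts, purely to exercise each branch
for count in (12, 4000, 20000):
    print(count, react_to_colour_count(count))
```

Note that a count sitting exactly on either threshold falls into the middle band, because the comparisons in the feature are strict.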

The OpenCV tutorial Changing Colorspaces was a great help in putting the feature together.

Here are the Happy Colour config file settings:

    [Colour]
    Lower=58,50,50
    Upper=78,255,255

    [Threshold]
    Lower=500
    Upper=15000
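If you want to poke at these settings outside SaltwashAR, here is a minimal sketch of reading them. I am assuming Python 3, where the module is called configparser (the feature itself uses the Python 2 ConfigParser module), and I read the settings from a string rather than from happycolour.ini so that the snippet stands alone.

```python
import configparser  # Python 3 name; the feature uses Python 2's ConfigParser

# Same settings as the Happy Colour config file, inlined for the example
INI_TEXT = """
[Colour]
Lower=58,50,50
Upper=78,255,255

[Threshold]
Lower=500
Upper=15000
"""

config = configparser.ConfigParser()
config.read_string(INI_TEXT)

# The colour range is stored as comma-separated H,S,V values
lower_colour = [int(v) for v in config.get("Colour", "Lower").split(",")]
upper_colour = [int(v) for v in config.get("Colour", "Upper").split(",")]

# The thresholds are plain integers (pixel counts)
lower_threshold = config.getint("Threshold", "Lower")
upper_threshold = config.getint("Threshold", "Upper")

print(lower_colour, upper_colour, lower_threshold, upper_threshold)
```

The list comprehension is there because map returns an iterator in Python 3, so wrapping it directly in np.array would not give the array of integers that the Python 2 code gets.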

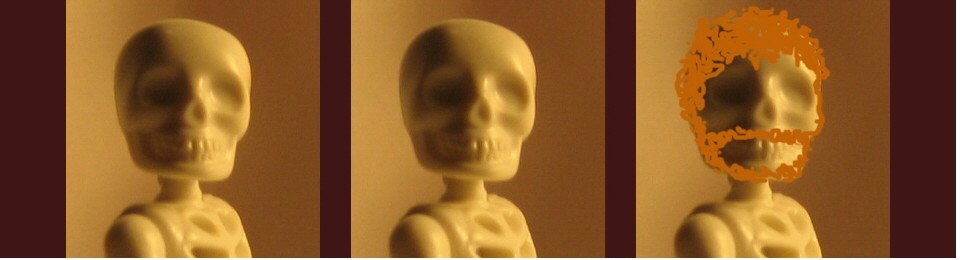

And here are the webcam images and masks…

The scene, with no objects:

As you can see, the mask is black, which means that no green is detected in the image.

Also, notice the 2D marker on the table. This is the pattern upon which SaltwashAR – my Python Augmented Reality application – renders the 3D robot.

Next up, the teddy bear:

Yes, the mask shows the green teddy bear being detected. Not enough green pixels to topple the Happy threshold, but enough to keep Rocky Robot from feeling sad.

Now for the globe object:

Only the odd green pixel is detected, fewer than the Sad threshold of 500. The robot is sad.

And finally the big green plant:

Hurray! Lots of green leafy goodness. The Happy threshold of 15000 has been breached and Rocky Robot dances with joy.

Please check out the SaltwashAR Wiki for details on how to install and help develop the SaltwashAR Python Augmented Reality application.

If you are wondering how I added emotion to the robots, check out my post Robot emotions with SaltwashAR.

Hello,

I have gone through your entire Saltwash AR tutorial and I am able to run the application in Desktop. Is it possible to run Saltwash AR on Android or IOS?

Hi Devesh, no I haven’t ported it to Android/IOS. If anyone fancies giving it a go, be my guest!
